Robotics URJC

Personal webpage for PhD Students.

View the Project on GitHub RoboticsLabURJC/2019-phd-alberto-martin

Reinforcement Learning

- Tabular Solution Methods: these methods can often find exact solutions, that is, exactly the optimal value function and the optimal policy.

- Multi-armed Bandits: special case with only a single state

- Finite Markov Decision Processes: general problem formulation for Reinforcement Learning problems.

- Dynamic Programming: method to solve finite MDPs. Needs a model of the environment.

- Monte Carlo Methods: method to solve finite MDPs. No model is required and the methods are conceptually simple, but they are not well suited to step-by-step incremental computation.

- Temporal-Difference Learning: method to solve finite MDPs. No model is required and the methods are fully incremental, but they are more complex to analyze.

- Approximate Solution Methods: in such cases we cannot expect to find an optimal policy or the optimal value function even in the limit of infinite time and data; our goal instead is to find a good approximate solution using limited computational resources.

- On-policy Prediction with Approximation

- On-policy Control with Approximation

- Off-policy Methods with Approximation

- Eligibility Traces

- Policy Gradient Methods
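The contrast drawn above between Monte Carlo and temporal-difference methods can be sketched with their tabular update rules for state-value prediction. This is a minimal illustration, not taken from the original notes: the function names, the step size `alpha`, and the discount `gamma` are all assumptions.

```python
from collections import defaultdict


def mc_update(V, episode, alpha=0.1, gamma=1.0):
    """Every-visit Monte Carlo update (illustrative sketch).

    Waits for a complete episode, then moves each visited state's value
    toward the full return observed from that state onward.
    `episode` is a list of (state, reward) pairs, where reward is the
    reward received after leaving that state.
    """
    G = 0.0
    for state, reward in reversed(episode):
        G = reward + gamma * G
        V[state] += alpha * (G - V[state])
    return V


def td0_update(V, state, reward, next_state, done, alpha=0.1, gamma=1.0):
    """TD(0) update (illustrative sketch).

    Updates after a single step by bootstrapping from the current
    estimate of the next state's value, so no full episode is needed.
    """
    target = reward + (0.0 if done else gamma * V[next_state])
    V[state] += alpha * (target - V[state])
    return V


# Example: value tables start at zero for every state.
V_mc = mc_update(defaultdict(float), [("a", 0.0), ("b", 1.0)], alpha=0.5)
```

The Monte Carlo update needs the whole episode before it can change any value, while the TD(0) update can be applied after every transition, which is what "fully incremental" refers to.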

2017 - A Brief Survey of Deep Reinforcement Learning

Classical methods:

- Model-free methods:

- value-based methods: based on temporal-difference learning; they learn a value function.

- TD

- Q-learning

- SARSA

- policy-based: directly learn optimal policy.

- policy search

- policy gradient:

- REINFORCE

- actor-critic methods

- Model-based methods:
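The classical value-based methods listed above, Q-learning and SARSA, differ mainly in the target they bootstrap from. The sketch below shows both tabular updates side by side. The function names, the dictionary-based Q-table, and the default `alpha` and `gamma` are illustrative assumptions rather than anything prescribed by the survey.

```python
import random
from collections import defaultdict


def epsilon_greedy(Q, state, actions, epsilon, rng=random):
    """Pick a random action with probability epsilon, else the greedy one."""
    if rng.random() < epsilon:
        return rng.choice(actions)
    return max(actions, key=lambda a: Q[(state, a)])


def q_learning_update(Q, s, a, r, s_next, actions, done, alpha=0.1, gamma=0.99):
    """Off-policy target: the best action value in the next state."""
    best_next = 0.0 if done else max(Q[(s_next, b)] for b in actions)
    Q[(s, a)] += alpha * (r + gamma * best_next - Q[(s, a)])


def sarsa_update(Q, s, a, r, s_next, a_next, done, alpha=0.1, gamma=0.99):
    """On-policy target: the value of the action actually taken next."""
    next_value = 0.0 if done else Q[(s_next, a_next)]
    Q[(s, a)] += alpha * (r + gamma * next_value - Q[(s, a)])


# Example: an empty Q-table where unseen state-action pairs default to 0.
Q = defaultdict(float)
```

Both functions update the Q-table in place. The only difference is whether the target uses the maximum over next actions (Q-learning) or the next action chosen by the behaviour policy (SARSA).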

Deep Learning methods:

- Model-free methods:

- value-based methods: Deep networks to represent value/Q functions. Estimate the value function, policy is implicit.

- DQN

- Double-Q

- Categorical DQN

- Continuous DQN (NAF (Normalised Advantage Function) or CDQN):

- Deep SARSA

- NEC (Neural Episodic Control)

- policy-based methods: estimate the policy directly, with no value function. Policies are represented by neural networks, which makes policy search more complex.

- policy search (End-to-End Training of Deep Visuomotor Policies):

- GPS (Guided Policy Search)

- policy gradients (Policy Gradient Methods for RL with function approximation):

- Actor-critic methods (Visual Navigation in Indoor Scenes): estimate the policy and the value function.

- DPG (Deterministic Policy Gradients)

- IPG (Interpolated Policy Gradients)

- A4C, A3C, A2C

- DA2C

- Soft actor-critic

- Q-Prop

- ACKTR

- Model-based methods: the agent has access to (or learns) a model of the environment. By a model of the environment, we mean a function which predicts state transitions and rewards. The main downside is that a ground-truth model of the environment is usually not available to the agent. If an agent wants to use a model in this case, it has to learn the model purely from experience.
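As a rough illustration of learning a model purely from experience, the sketch below keeps transition counts and reward averages for a small discrete problem. The class name `TabularModel` and its methods are hypothetical, and a count-based table is only one simple choice; deep model-based methods would typically replace it with a learned function approximator.

```python
from collections import defaultdict


class TabularModel:
    """Count-based model of transitions and rewards (illustrative sketch)."""

    def __init__(self):
        # counts[(s, a)][s_next] = how often s_next followed (s, a)
        self.counts = defaultdict(lambda: defaultdict(int))
        self.reward_sum = defaultdict(float)
        self.visits = defaultdict(int)

    def update(self, s, a, r, s_next):
        """Record one observed transition (s, a, r, s_next)."""
        self.counts[(s, a)][s_next] += 1
        self.reward_sum[(s, a)] += r
        self.visits[(s, a)] += 1

    def transition_probs(self, s, a):
        """Empirical estimate of P(s_next | s, a); empty if never visited."""
        n = self.visits[(s, a)]
        if n == 0:
            return {}
        return {s2: c / n for s2, c in self.counts[(s, a)].items()}

    def expected_reward(self, s, a):
        """Empirical mean reward for (s, a); 0.0 if never visited."""
        n = self.visits[(s, a)]
        return self.reward_sum[(s, a)] / n if n else 0.0
```

Once enough transitions have been recorded, the agent can query `transition_probs` and `expected_reward` to simulate the environment instead of interacting with it.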

Current research & challenges:

- Model-based RL: the key idea behind model-based RL is to learn a transition model that allows for simulation of the environment without interacting with the environment directly.

- Exploration vs. Exploitation: one of the greatest difficulties in RL is the fundamental dilemma of exploration versus exploitation: When should the agent try out (perceived) non-optimal actions in order to explore the environment (and potentially improve the model), and when should it exploit the optimal action in order to make useful progress?

- Hierarchical RL: In the same way that deep learning relies on hierarchies of features, HRL relies on hierarchies of policies.

- Imitation Learning and Inverse RL:

- Memory and attention:

- Transfer Learning:

- Benchmarks: one of the challenges in any field in machine learning is developing a standardised way to evaluate new techniques.

2018 - Dexterous Manipulation with Reinforcement Learning: Efficient, General, and Low-Cost

2018 - Learning to Walk via Deep Reinforcement Learning

2018 - An Introduction to Deep Reinforcement Learning

2018 - Deep Reinforcement Learning for robotic manipulation-the state of the art

2018 - Qt-Opt: Scalable Deep Reinforcement Learning for Vision-Based Robotic Manipulation

2017 - Deep Reinforcement Learning for Robotic Manipulation with Asynchronous Off-Policy Updates

Definitions

Multi-armed Bandits

A one-armed bandit is a simple slot machine: you insert a coin, pull a lever, and get an immediate reward. A multi-armed bandit is a more complicated slot machine with several levers instead of one, each giving a different return. The probability distribution of the reward for each lever is different and is unknown to the gambler.

The task is to identify which lever to pull in order to get the maximum reward over a given set of trials. This problem statement is like a single-step Markov decision process: each arm chosen is equivalent to an action, which then leads to an immediate reward.
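One common way to approach this task is an epsilon-greedy strategy with incremental sample-average estimates. The sketch below is only an illustration: the Gaussian reward distributions, the function name `run_bandit`, and all default parameters are assumptions, not part of the definition above.

```python
import random


def run_bandit(true_means, steps=5000, epsilon=0.1, seed=0):
    """Epsilon-greedy on a multi-armed bandit (illustrative sketch).

    `true_means` holds the mean reward of each arm; the learner never
    reads it directly and only sees the sampled rewards.
    """
    rng = random.Random(seed)
    n_arms = len(true_means)
    estimates = [0.0] * n_arms  # running average reward per arm
    pulls = [0] * n_arms        # number of times each arm was pulled
    total_reward = 0.0
    for _ in range(steps):
        if rng.random() < epsilon:
            arm = rng.randrange(n_arms)  # explore: random arm
        else:
            arm = max(range(n_arms), key=lambda i: estimates[i])  # exploit
        # Assumed reward distribution: Gaussian with unit variance.
        reward = rng.gauss(true_means[arm], 1.0)
        pulls[arm] += 1
        # Incremental update of the sample average.
        estimates[arm] += (reward - estimates[arm]) / pulls[arm]
        total_reward += reward
    return estimates, pulls, total_reward


estimates, pulls, total_reward = run_bandit([0.0, 0.5, 2.0])
```

With a small `epsilon` the learner spends most pulls on the arm it currently believes is best, while the occasional random pull keeps the estimates of the other arms from going stale.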

Reinforcement Learning Guide: Solving the Multi-Armed Bandit Problem from Scratch in Python

Agent-environment

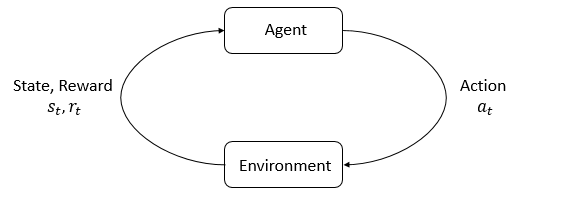

The main characters of RL are the agent and the environment. The environment is the world that the agent lives in and interacts with. At every step of interaction, the agent sees a (possibly partial) observation of the state of the world, and then decides on an action to take. The environment changes when the agent acts on it, but may also change on its own.

The agent also perceives a reward signal from the environment, a number that tells it how good or bad the current world state is. The goal of the agent is to maximize its cumulative reward, called the return. Reinforcement learning methods are ways for the agent to learn behaviors that achieve this goal.
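The interaction described above can be sketched as a simple loop. The `LineWorld` environment below is a hypothetical toy, and its `reset`/`step` interface is an assumption chosen for illustration: the agent moves along a line, the environment may also drift on its own, and the reward reflects how far the current state is from a goal position.

```python
import random


class LineWorld:
    """Hypothetical 1-D environment used only to illustrate the loop."""

    def __init__(self, size=10, goal=7, horizon=20, drift_prob=0.1, seed=0):
        self.size = size
        self.goal = goal
        self.horizon = horizon
        self.drift_prob = drift_prob
        self.rng = random.Random(seed)

    def reset(self):
        self.pos = 0
        self.t = 0
        return self.pos  # observation: here the full state is visible

    def step(self, action):
        self.pos += action  # the agent acts on the environment (-1, 0 or +1)
        if self.rng.random() < self.drift_prob:
            self.pos += self.rng.choice([-1, 1])  # it may also change on its own
        self.pos = min(max(self.pos, 0), self.size - 1)
        self.t += 1
        reward = -abs(self.pos - self.goal)  # how good the current state is
        done = self.t >= self.horizon
        return self.pos, reward, done


def run_episode(env, policy):
    """Run one episode and return the cumulative reward (the return)."""
    obs = env.reset()
    episode_return = 0.0
    done = False
    while not done:
        action = policy(obs)
        obs, reward, done = env.step(action)
        episode_return += reward
    return episode_return
```

A policy here is any function from observation to action, for example `lambda obs: 1` to always move right.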

States and Observations

A state s is a complete description of the state of the world. There is no information about the world which is hidden from the state. An observation o is a partial description of a state, which may omit information.

Action Spaces

Different environments allow different kinds of actions. The set of all valid actions in a given environment is often called the action space. Some environments, like Atari and Go, have discrete action spaces, where only a finite number of moves are available to the agent. Other environments, such as those where the agent controls a robot in the physical world, have continuous action spaces. In continuous spaces, actions are real-valued vectors.

Policies

A policy is a rule used by an agent to decide what actions to take. It can be deterministic, in which case it is usually denoted by mu, or stochastic, in which case it is usually denoted by pi.
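The difference between the two kinds of policy can be shown with a small sketch. The threshold rule and the softmax over action preferences are illustrative assumptions; any mapping from states to actions, or from states to action distributions, would serve equally well.

```python
import math
import random


def deterministic_policy(state):
    """mu(s): the same state always maps to the same action.

    The sign-based rule is a hypothetical example.
    """
    return 1 if state >= 0 else -1


def softmax(preferences):
    """Turn real-valued preferences into a probability distribution."""
    m = max(preferences)  # subtract the max for numerical stability
    exps = [math.exp(p - m) for p in preferences]
    total = sum(exps)
    return [e / total for e in exps]


def stochastic_policy(state, preferences, rng=random):
    """pi(. | s): sample an action index from a distribution over actions.

    `preferences` maps each state to a list of per-action preferences.
    """
    probs = softmax(preferences[state])
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]
```

Calling `deterministic_policy` twice with the same state gives the same action, whereas repeated calls to `stochastic_policy` can return different actions for the same state.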

Trajectories

A trajectory T is a sequence of states and actions in the world.

Reward and Return

The reward function R is critically important in reinforcement learning. It depends on the current state of the world, the action just taken, and the next state of the world, although frequently this is simplified to a dependence on just the current state, r_t = R(s_t), or on the state-action pair, r_t = R(s_t, a_t).

The goal of the agent is to maximize some notion of cumulative reward over a trajectory.
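The text leaves the exact notion of cumulative reward open. Two common choices are the plain sum of rewards over a finite trajectory and the discounted sum, in which later rewards are weighted by powers of a discount factor. The sketch below computes both; the function names and the default `gamma` are assumptions.

```python
def undiscounted_return(rewards):
    """Finite-horizon undiscounted return: the plain sum of rewards."""
    return sum(rewards)


def discounted_return(rewards, gamma=0.99):
    """Discounted return: sum of gamma**t * r_t over the trajectory.

    Computed backwards so each step is one multiply and one add.
    """
    G = 0.0
    for r in reversed(rewards):
        G = r + gamma * G
    return G
```

For example, with rewards `[1, 1, 1]` and `gamma=0.5`, the discounted return is 1 + 0.5 + 0.25 = 1.75, while the undiscounted return is 3.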

The RL Problem

Whatever the choice of return measure, and whatever the choice of policy, the goal in RL is to select a policy which maximizes expected return when the agent acts according to it.

Value Function

It’s often useful to know the value of a state, or state-action pair. By value, we mean the expected return if you start in that state or state-action pair, and then act according to a particular policy forever after. Value functions are used, one way or another, in almost every RL algorithm.
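Because a value is an expected return, one simple way to approximate it is to average the returns of many sampled episodes that start from the state of interest and follow the policy. The sketch below assumes a discounted return and a hypothetical rollout function `noisy_three_step_episode` standing in for "run the policy from this state"; neither comes from the original text.

```python
import random


def estimate_value(sample_episode_rewards, n_episodes=1000, gamma=0.9, seed=0):
    """Monte Carlo estimate of a state's value (illustrative sketch).

    `sample_episode_rewards(rng)` must return the list of rewards from one
    episode that starts in the state of interest and follows the policy.
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_episodes):
        rewards = sample_episode_rewards(rng)
        G = 0.0
        for r in reversed(rewards):  # discounted return of this episode
            G = r + gamma * G
        total += G
    return total / n_episodes


def noisy_three_step_episode(rng):
    """Hypothetical rollout: three steps, each paying 1 with probability 0.5."""
    return [1.0 if rng.random() < 0.5 else 0.0 for _ in range(3)]


value_estimate = estimate_value(noisy_three_step_episode, n_episodes=5000)
```

For this toy rollout the true value is 0.5 * (1 + 0.9 + 0.81) = 1.355, so the estimate should land close to that number as the number of episodes grows.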

Policy Search: Methods and Applications

Courses

Reinforcement Learning Explained

Deep Reinforcement Learning Nanodegree

OpenAI Spinning Up - rl introduction

Course: CPSC522/Markov Decision Process (UBC)

Course: CS20 Tensorflow for Deep Learning Research (Stanford)

Intro to TensorFlow for Deep Learning

TensorFlow: From Basics to Mastery

Blogs & Resources

Deep Reinforcement Learning: Pong from Pixels

Demystifying Deep Reinforcement Learning

Simple Reinforcement Learning with Tensorflow

Reinforcement Learning: Q-Learning and exploration

A Beginner’s Guide to Deep Reinforcement Learning

Model-based reinforcement learning

Deep Reinforcement Learning: Playing CartPole through Asynchronous Advantage Actor Critic (A3C)

Actor-Critic Methods: A3C and A2C

Soft Actor Critic—Deep Reinforcement Learning with Real-World Robots

Deep Reinforcement Learning for Robotics

Google X’s Deep Reinforcement Learning in Robotics using Vision

Controlling a 2D Robotic Arm with Deep Reinforcement Learning

Reinforcement Q-Learning from Scratch in Python with OpenAI Gym

Reinforcement Learning: Introduction to Monte Carlo Learning using the OpenAI Gym Toolkit

Reinforcement Learning Demystified: Markov Decision Processes