Week 26-27. Migration from the Turtlebot example to the Formula 1

To Do

- Migration from the Turtlebot example to Formula 1.

- Creating a new environment for the Formula 1 example.

- Resolved perception (3 points).

- Cleaning and refactoring (ON GOING).

- Replace the laser with the camera while keeping the same behavior (ON GOING).

- First prototype with 5 angular velocity (W) and 3 linear velocity (V) outputs.

Progress

1. Migration of the example

The idea is simple: take the working Turtlebot code and replicate it for the Formula 1 case. This means swapping the Turtlebot model for the car model, whose topics have different names, so it is necessary to find out what they are called and change them in the code. In addition, the launch, world and model files have been added to the library folder, since the new example will be fed from the same folder as the previous one. The current status of this point is fixing the last errors that keep the robot from moving when the program starts. There are fewer left :-)

2. Creating a new OpenAI Gym environment

Registering a new environment in the Gym-Gazebo library consists of adding a new entry to the list of environments and importing the new example in the relevant modules, specifically the `__init__.py` files of the environments (envs) and of the library (gym-gazebo).

The result of the migration can be seen in the following animation: when the laser detects the wall at the end of the line, the simulation restarts.

At each step an action is selected at random: 0, 1 or 2. Each action corresponds to one movement (advance, turn left or turn right) at a constant linear and angular speed.

    # 3 actions
    if action == 0:  # FORWARD
        vel_cmd = Twist()
        vel_cmd.linear.x = 20
        vel_cmd.angular.z = 0.0
        self.vel_pub.publish(vel_cmd)
    elif action == 1:  # LEFT
        vel_cmd = Twist()
        vel_cmd.linear.x = 0.05
        vel_cmd.angular.z = 0.2
        self.vel_pub.publish(vel_cmd)
    elif action == 2:  # RIGHT
        vel_cmd = Twist()
        vel_cmd.linear.x = 0.05
        vel_cmd.angular.z = -0.2
        self.vel_pub.publish(vel_cmd)
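The three branches above differ only in two numbers, so one way to keep the step function short is a lookup table. The following is a minimal sketch, not the code used in the project: the names `ACTION_VELOCITIES`, `velocity_for` and `choose_random_action` are hypothetical, and plain tuples stand in for the `Twist` message so that the snippet runs without ROS.

```python
import random

# (linear.x, angular.z) for each action index.
# The values are copied from the snippet above; the table itself is an assumption.
ACTION_VELOCITIES = {
    0: (20.0, 0.0),    # FORWARD
    1: (0.05, 0.2),    # LEFT
    2: (0.05, -0.2),   # RIGHT
}


def velocity_for(action):
    """Return the (linear, angular) pair for a discrete action index."""
    if action not in ACTION_VELOCITIES:
        raise ValueError("unknown action: %r" % (action,))
    return ACTION_VELOCITIES[action]


def choose_random_action(rng=random):
    """Pick one of the available action indices uniformly at random."""
    return rng.choice(sorted(ACTION_VELOCITIES))
```

Inside the environment, the returned pair would be copied into `vel_cmd.linear.x` and `vel_cmd.angular.z` before publishing, exactly as in the original branches.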

It is now time to replace the laser with the camera :-).

3. Give the robot the resolved perception

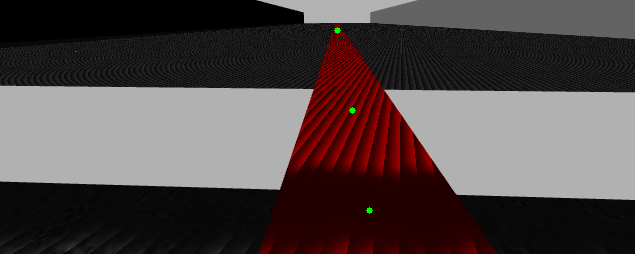

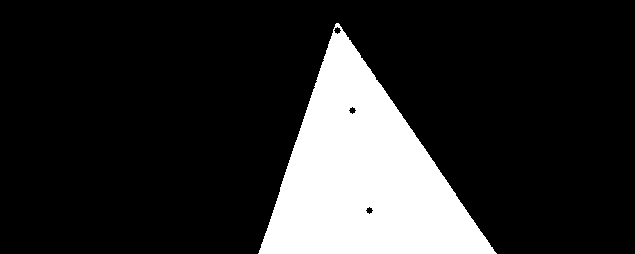

As a first approximation to the network input, we want to check that the DQN algorithm is able to learn when it is given only 3 points of a 640x480 image (if we work with the whole image), or of the lower half, where the red line to follow is located (640x240). If this learning is successful, the next step will be to train with the complete mask image and, later, with the input in ‘Draw’ format. The 3 initial points are taken at different heights on the red line: if they are aligned, the car is on a straight, and otherwise their offset from the line indicates a more open or a tighter curve. The images in this section show the resulting input image.

With the coordinates of these three points, the distance to the center of the image will be measured and used to evaluate how well the car is doing.
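To make the 3-point idea concrete, here is a small sketch under stated assumptions: the mask is a list of rows with non-zero values where the red line was detected, the three rows to sample are chosen by the caller, and the function names `line_centers` and `center_errors` are hypothetical. It is not the perception code of the project.

```python
def line_centers(mask, rows):
    """For each requested row, return the mean column of the non-zero
    pixels, or None when the line is not visible in that row."""
    centers = []
    for r in rows:
        cols = [c for c, value in enumerate(mask[r]) if value]
        centers.append(sum(cols) / len(cols) if cols else None)
    return centers


def center_errors(centers, width):
    """Signed horizontal distance from each point to the image center column."""
    mid = width / 2.0
    return [None if c is None else c - mid for c in centers]


# Tiny 6x10 mask used only for illustration (a real mask would be 640 wide).
mask = [[0] * 10 for _ in range(6)]
mask[1][4] = mask[1][5] = 1   # line centered at column 4.5
mask[3][7] = 1                # line at column 7
centers = line_centers(mask, [1, 3, 5])
errors = center_errors(centers, width=10)   # [-0.5, 2.0, None]
```

A negative error means the point lies to the left of the image center and a positive one to the right; `None` marks a row where the line was not found.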

4. Outputs

The output of the DQN will initially be limited to 5 angular and 3 linear velocity values. Once the algorithm has been trained with these values, it will be tested with a larger set of output values.
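One possible reading of this output space is that every combination of a linear and an angular value becomes one discrete action, which gives 15 outputs. The text does not say whether the values are combined this way, so the sketch below is an assumption, and the velocity values and the names `ANGULAR_VELOCITIES`, `LINEAR_VELOCITIES`, `ACTION_SET` and `decode_action` are hypothetical.

```python
from itertools import product

# Hypothetical values, chosen only for illustration.
ANGULAR_VELOCITIES = [-0.4, -0.2, 0.0, 0.2, 0.4]   # W: 5 values
LINEAR_VELOCITIES = [5.0, 10.0, 20.0]              # V: 3 values

# One discrete action per (V, W) pair: 3 * 5 = 15 actions.
ACTION_SET = list(product(LINEAR_VELOCITIES, ANGULAR_VELOCITIES))


def decode_action(index):
    """Map the index of a network output to its (linear, angular) pair."""
    return ACTION_SET[index]
```

Adding more output values later would then only require extending the two lists.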

5. Cleaning and refactoring of the example

The original Turtlebot example has a somewhat strange structure. The plan is, first, to remove the laser component and replicate the same behavior with the camera, and then to reorganize and improve the rest of the components. This will not be solved in a single week; it will be improved with each revision of the code.

Working

I am still working on finalizing the adaptation of the Turtlebot example to the Formula 1 case. The change in topic names makes it less straightforward than expected and requires further adaptation.

Learning

The adaptation is taking longer than I thought, but I’m gaining experience in handling ROS topics and in how the Gym-Gazebo library works. I hope this effort will make future problems easier to solve :-)