Week 11 - Dataset Expansion with DAGGER Philosophy and Imitation Learning Performance Evaluation

December 9, 2025

Implementation of comprehensive DAGGER-based data collection and analysis of imitation learning outcomes with MobileNet 2.0

This week saw significant progress in consolidating the DAGGER (Dataset Aggregation) methodology and in evaluating an autonomous driving model trained through imitation learning. The results make it possible to identify both the advances achieved and the limitations that persist in the system.

[Image: Diagram of the dataset composition]

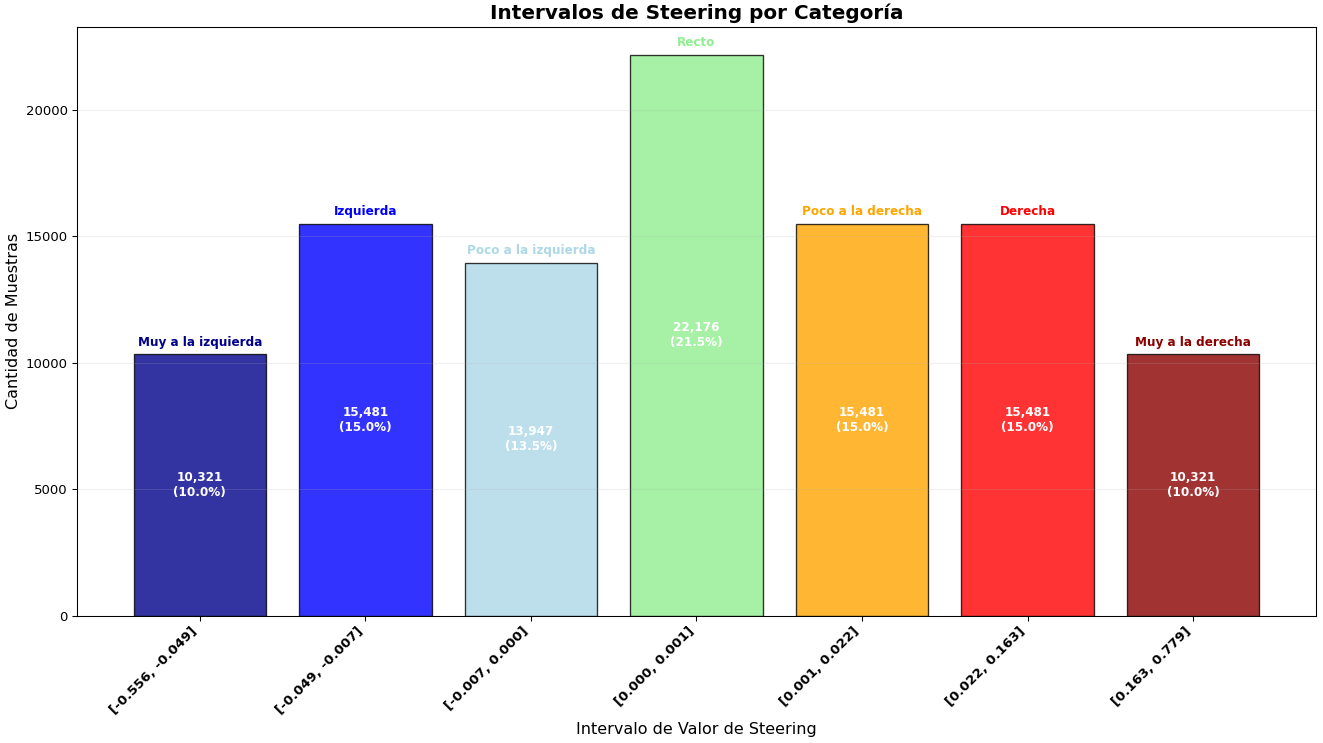

1. Implementation of the DAGGER philosophy and generation of a balanced dataset:

The DAGGER philosophy has been applied systematically to data aggregation, resulting in a dataset of 103,000 images that incorporates random bursts: each burst lasts between 1 and 3 seconds, takes one of two steering values (0.5 or -0.5), and occurs at a random time. The dataset covers five fundamental driving modalities: direct driving (lane keeping), controlled right turn, controlled left turn, recovery to the right lane from a deviated position in the left lane, and advancement to the center of the right lane from a lateral position. This diversification of scenarios has improved the balance in the distribution of behavior classes, providing a more representative database for training.

[Image: DAGGER driving.]
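The random bursts described above can be sketched as a small schedule generator. This is a minimal illustration, not the project's collection code: the names `Burst`, `sample_bursts`, and `applied_steering` are hypothetical, and it assumes that a burst temporarily overrides the steering command sent to the vehicle while the expert's steering is used at all other times.

```python
import random
from dataclasses import dataclass


@dataclass
class Burst:
    start: float     # seconds since the start of the episode
    duration: float  # seconds, drawn uniformly from [1, 3]
    steering: float  # either 0.5 or -0.5


def sample_bursts(episode_length, n_bursts, seed=None):
    """Draw n_bursts random bursts that fit inside one episode (hypothetical helper)."""
    rng = random.Random(seed)
    bursts = []
    for _ in range(n_bursts):
        duration = rng.uniform(1.0, 3.0)
        # Randomize the start time so that the burst ends before the episode does.
        start = rng.uniform(0.0, max(0.0, episode_length - duration))
        steering = rng.choice([0.5, -0.5])
        bursts.append(Burst(start, duration, steering))
    return sorted(bursts, key=lambda b: b.start)


def applied_steering(t, expert_steering, bursts):
    """Return the burst steering if t falls inside a burst, else the expert's value."""
    for b in bursts:
        if b.start <= t < b.start + b.duration:
            return b.steering
    return expert_steering


if __name__ == "__main__":
    for burst in sample_bursts(episode_length=60.0, n_bursts=5, seed=0):
        print(burst)
```

Bursts may overlap in this sketch; when they do, the one that starts first takes precedence. Whether the image recorded during a burst is labeled with the burst value or with the expert's command is a design decision that the sketch leaves open.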

2. Imitation Learning training with MobileNet 2.0 and initial evaluation:

Using the MobileNet 2.0 architecture as a feature extractor, a policy model was trained through Imitation Learning (IL). Initial results are promising: during the first seconds of execution the autonomous agent accurately emulates the expert driver's behavior, maintaining a stable trajectory and making appropriate steering adjustments. After this initial period, however, driving quality degrades progressively, with lane-keeping errors and cumulative deviations that ultimately compromise the vehicle's safe operation.

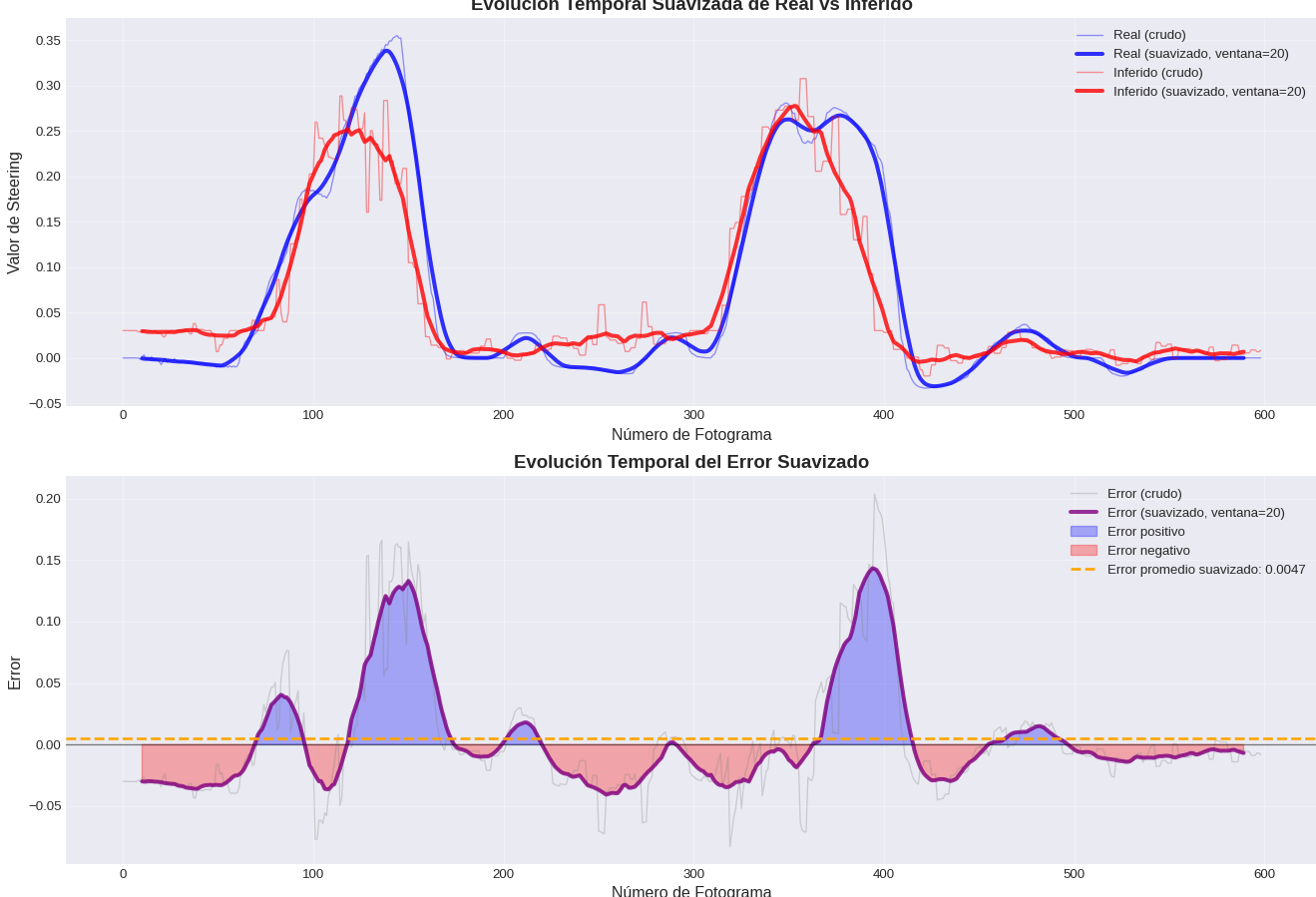

3. Comparative behavior analysis: imitation and error accumulation:

A detailed comparison of the trajectories generated by the expert driver and the trained autonomous agent reveals a critical phenomenon. The model imitates control actions effectively in the short term, but the two trajectories diverge progressively. This divergence indicates a systematic accumulation of error, in which small discrepancies in steering prediction are amplified over time. Although the agent is grounded in the imitation of human behavior, it propagates these errors, which highlights an intrinsic limitation of pure imitation in the face of environmental variability and noise.

[Image: Autonomous driver in action.]

[Image: Expert driving vs. inferred control and imitation error.]
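The way a small steering discrepancy grows into a large trajectory gap can be illustrated with a toy open-loop simulation. It is only a sketch under strong assumptions: the kinematic model, the `speed`, `dt`, and `gain` values, and the constant steering bias of 0.02 are all invented for illustration and are not measurements from the project. The real agent runs in closed loop on camera images, so its behavior will differ in detail.

```python
import math


def rollout(steering, speed=5.0, dt=0.1, gain=0.5):
    """Integrate a toy kinematic model and return the lateral offset at each step."""
    heading, lateral = 0.0, 0.0
    offsets = []
    for s in steering:
        heading += gain * s * dt                    # steering changes the heading
        lateral += speed * math.sin(heading) * dt   # heading changes lateral position
        offsets.append(lateral)
    return offsets


def divergence(expert_steering, agent_steering, **kwargs):
    """Absolute lateral gap between expert and agent trajectories, step by step."""
    expert = rollout(expert_steering, **kwargs)
    agent = rollout(agent_steering, **kwargs)
    return [abs(a - e) for a, e in zip(agent, expert)]


# Expert drives straight; the agent copies it with a small constant steering bias.
expert = [0.0] * 100
agent = [s + 0.02 for s in expert]
gap = divergence(expert, agent)

if __name__ == "__main__":
    for step in (9, 49, 99):
        print(f"step {step + 1:3d}: lateral gap = {gap[step]:.3f} m")
```

In this toy model the per-step steering error stays constant, yet the lateral gap grows roughly with the square of elapsed time, because the error is integrated twice (steering into heading, heading into position).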

This week's findings confirm that DAGGER produced a more balanced dataset, but they also reveal pending challenges in the long-term generalization and stability of imitation learning models. The next step is to investigate mechanisms for mitigating cumulative error drift, possibly by integrating real-time feedback or interaction-based policy refinement strategies.
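One form that interaction-based refinement could take is the iterative DAgger loop itself: roll out the current policy, have the expert label the states it visits, aggregate those labels with the existing data, and retrain. The sketch below shows that loop on a deliberately trivial 1-D problem. Everything in it is an assumption for illustration: the state is a lateral offset, the "expert" is a clipped proportional controller, the policy is a single weight fitted by least squares, and the names `expert_action`, `fit_linear`, `rollout`, and `dagger` are hypothetical. It is not the project's training pipeline.

```python
import random


def expert_action(x):
    """Toy expert: steer back toward the lane center, clipped to [-1, 1]."""
    return max(-1.0, min(1.0, -0.8 * x))


def fit_linear(dataset):
    """Least-squares fit of action = weight * state over (state, action) pairs."""
    num = sum(x * a for x, a in dataset)
    den = sum(x * x for x, _ in dataset)
    return num / den if den > 0 else 0.0


def rollout(weight, beta, rng, steps=50):
    """Drive with a mixture of expert (probability beta) and learned policy.

    Returns the states visited, which the expert will label afterwards.
    """
    x = rng.uniform(-1.0, 1.0)
    visited = []
    for _ in range(steps):
        visited.append(x)
        action = expert_action(x) if rng.random() < beta else weight * x
        x += 0.1 * action + rng.gauss(0.0, 0.02)  # toy dynamics with noise
    return visited


def dagger(iterations=5, seed=0):
    rng = random.Random(seed)
    dataset = []   # aggregated (state, expert action) pairs
    weight = 0.0   # untrained policy
    for i in range(iterations):
        beta = 0.5 ** i  # expert drives alone at i = 0, then hands over control
        states = rollout(weight, beta, rng)
        # Label the states the current policy reached with the expert's action.
        dataset.extend((x, expert_action(x)) for x in states)
        weight = fit_linear(dataset)  # retrain on the aggregated dataset
    return weight, len(dataset)


if __name__ == "__main__":
    weight, n_samples = dagger()
    print(f"learned weight = {weight:.3f} from {n_samples} labeled states")
```

The key point of the loop is that later iterations collect labels on states reached by the learned policy rather than only on states reached by the expert, which is what targets the drift observed this week. In a real setup the expensive part is obtaining expert labels for those states, which this toy example sidesteps entirely.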